The Virtual World is Now Real via 3D Earth Observation

If you want to improve your company’s data visualization capabilities in the area of 3D Earth observation, you’d better get in the game.

More specifically, you need to explore Epic Games’ Unreal Engine. The maker of Fortnite, which gifted the Floss and the Electro Shuffle to the world, is also the leading provider of the infrastructure necessary to produce and support cutting-edge visualizations of real-world data.

The Unreal Engine, which debuted in 1998 with Epic Games’ first-person shooter Unreal, is now the cornerstone of Epic’s business. It is one of the most advanced real-time 3D rendering and editing tools available and the backbone of many popular games. Increasingly, other industries are using it too.

If you watched The Mandalorian or Westworld, you saw scenes produced with the help of the Unreal Engine, which Epic has made available to developers via GitHub.

Now, we’re using it too.

Over the last few months, Terris’s developer team has been busy integrating the engine into our 3D visualization platform.

We’re not the only ones. The Open Geospatial Consortium, the global standards-setting organization for geospatial data, has been working with Epic Games too. I’ve been on a few Zoom calls over the last few weeks that suggest to me that the Engine will soon be powering real-life applications.

Welcome to the Metaverse, the long-envisioned space that will merge real-life physical spaces with augmented, virtually enhanced spaces.

It’s the stuff of science fiction, first entering our collective imaginations in American-Canadian author William Gibson’s 1984 novel Neuromancer. He called it cyberspace, a word that caught on and became a catch-all name for the Internet. Author Neal Stephenson coined the newer word, Metaverse, in his 1992 novel Snow Crash, in which people enter a virtual reality as avatars, interacting with each other and with software agents.

That sounds about right.

While Terris Pilot, our 3D visualization platform, won’t be popping up on screens looking like a Jedi knight (at least not yet), what we are building shares a lot of similarities with the virtual world Gibson and Stephenson envisioned in the latter years of the 20th century.

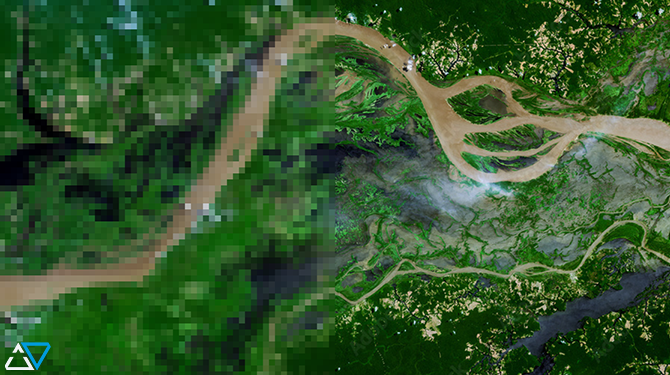

Our 3D visualization is immersive, fusing 2D satellite and aerial images of the Earth to create volumetric 3D images. Imagine a digital map, but instead of seeing the Earth in 2D or simulated 3D, you see an actual 3D image. That’s Terris’s starting point. Then we layer in additional data, such as geospatial thematic layers, environmental data, historical imagery, and 3D infrastructure models, to name a few. What you’ll see is an enhanced map of the part of the world you want to understand.

The real world + all of the data now being collected about it.

Welcome to the Metaverse; it’s a whole new world.